EVA Release v2.6

EVA v2.6: Understanding Situations More Deeply, Enabling Clearer Operational Decisions

EVA v2.6 starts from a practical operational question: "How should detection results be interpreted, and which alerts should be handled first?" Now that accurate detection is the baseline, what matters more in the field is clear operational judgment, with questions such as "Is this alert truly critical?" and "Does this situation require immediate action?" This update looks beyond what the AI sees and focuses on how operators understand and use detection results.

- Clearer prioritization criteria as the number of alerts increases

- A structure that accumulates detection results as operational metrics, not just isolated incidents

- Detection that understands the flow of a situation, not just a single image

- Selectable detection processes that do not treat all scenarios equally

v2.6 represents a step forward for EVA—from a simple detection system to an AI operations platform that supports operator decision-making.

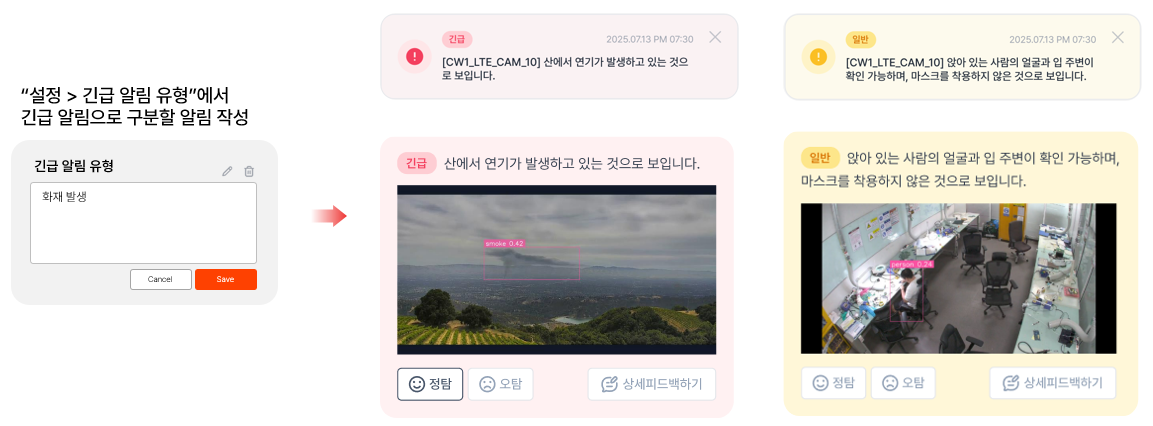

Emergency Alert Settings: Making Critical Alerts Impossible to Miss

As the number of scenarios increases, so does the volume of alerts. When every alert is displayed with the same level of importance, operators have to work out the priorities themselves, one alert at a time. To reduce this burden, v2.6 lets operators define in plain text which types of alerts require an urgent response. Alerts designated as emergency alerts are clearly distinguished from general alerts, so operators can immediately recognize the situations that must be checked first, even among a large number of notifications.

As alerts increase, what matters is not the number of alerts, but which alert requires action first. Emergency alert settings in v2.6 make operational judgment criteria clearer and more actionable.
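The prioritization idea can be sketched in a few lines of Python. This is only an illustration, not EVA's implementation: the release notes do not say how a text definition is matched against an alert, so the sketch assumes simple case-insensitive phrase matching, and the `Alert` class, `EMERGENCY_PHRASES`, `is_emergency`, and `prioritize` are all hypothetical names.

```python
from dataclasses import dataclass

# Hypothetical emergency definitions, entered as free text by an operator.
EMERGENCY_PHRASES = ["fire", "collapse", "weapon"]


@dataclass
class Alert:
    scenario: str
    message: str
    timestamp: float  # seconds; larger means more recent


def is_emergency(alert, phrases=EMERGENCY_PHRASES):
    """Assumption: an alert is an emergency if its message contains any phrase."""
    text = alert.message.lower()
    return any(phrase.lower() in text for phrase in phrases)


def prioritize(alerts, phrases=EMERGENCY_PHRASES):
    """Return alerts with emergencies first, newest first within each group."""
    return sorted(
        alerts,
        key=lambda a: (not is_emergency(a, phrases), -a.timestamp),
    )


alerts = [
    Alert("lobby", "Person entered lobby", 3.0),
    Alert("gym", "Person collapse detected", 1.0),
    Alert("dock", "Smoke and fire near dock", 2.0),
]
for alert in prioritize(alerts):
    label = "EMERGENCY" if is_emergency(alert) else "general"
    print(f"[{label}] {alert.scenario}: {alert.message}")
```

Sorting on a two-part key keeps the rule explicit: emergency status decides the group, and recency only breaks ties inside a group.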

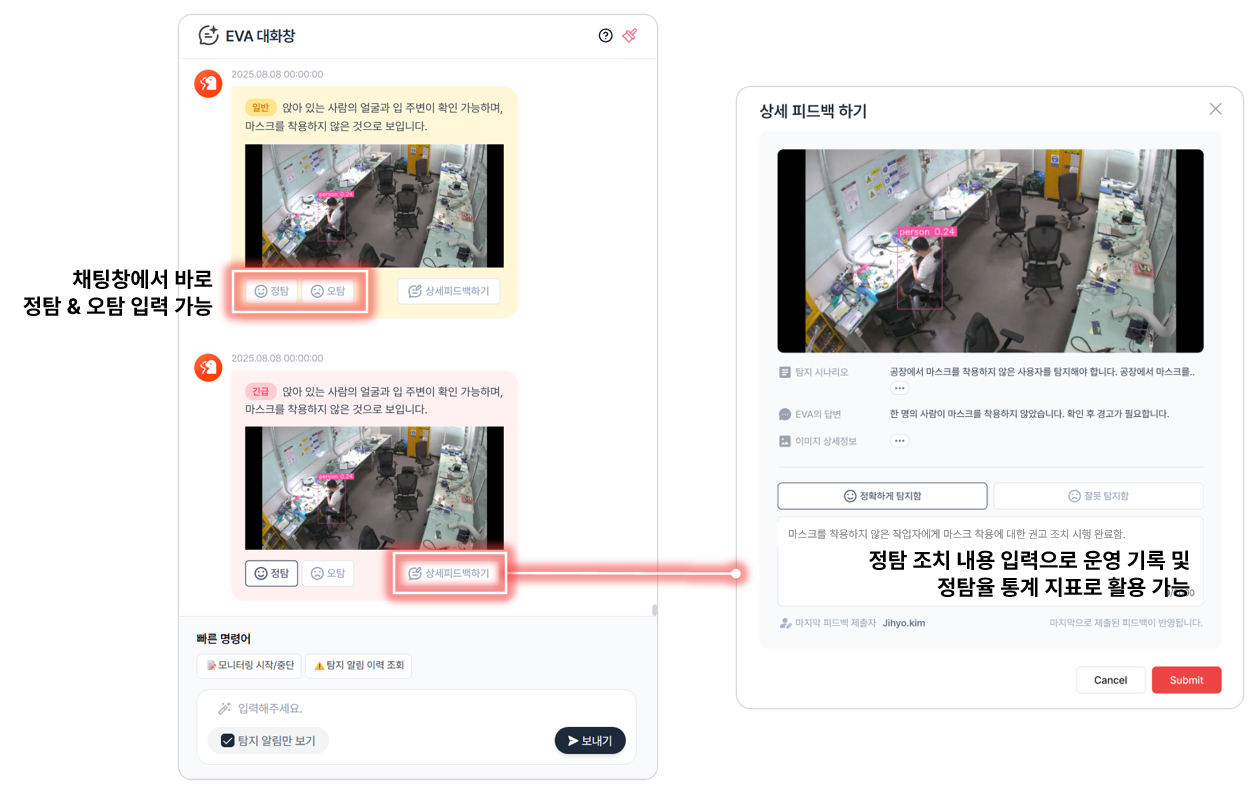

Expanded False/True Detection Feedback: Managing Feedback as Operational Records and Metrics

Previously, feedback could only be recorded for false detections. In v2.6, feedback can now be entered for true detections as well. Operators can explicitly record whether each detection result is false or true, and this data is accumulated and managed as scenario-level true detection rate metrics.

As a result, detection results evolve from simple event logs into quantitative data used to evaluate and improve operational quality.
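As a rough illustration of how such a metric could be derived, the sketch below aggregates feedback records into a per-scenario true detection rate. The formula is an assumption (true detections divided by all alerts that received feedback); the release notes do not define the exact calculation, and `true_detection_rates` and the record layout are hypothetical.

```python
from collections import defaultdict


def true_detection_rates(feedback):
    """Compute a true detection rate per scenario.

    feedback: iterable of (scenario_name, is_true_detection) pairs.
    Assumed definition: true / (true + false), counting only alerts
    that actually received feedback.
    """
    counts = defaultdict(lambda: [0, 0])  # scenario -> [true_count, total]
    for scenario, is_true in feedback:
        counts[scenario][1] += 1
        if is_true:
            counts[scenario][0] += 1
    return {scenario: true / total for scenario, (true, total) in counts.items()}


feedback_log = [
    ("fall", True),
    ("fall", False),
    ("fall", True),
    ("fall", True),
    ("intrusion", False),
]
rates = true_detection_rates(feedback_log)
print(rates)
```

Scenarios with no feedback do not appear in the result at all, which avoids reporting a misleading rate of zero for alerts nobody has reviewed.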

In addition, the feedback history functions as an operational record, allowing teams to trace which alerts were actually reviewed and what decisions and responses followed. The UI has also been improved so that operators can see whether feedback has been entered directly in the alert list in the chat window, without navigating to a detail page.

Main Screen Improvements: Only the Information Operators Truly Need, Presented More Intuitively

The main screen is where operators spend most of their time. In v2.6, the list structure has been reorganized around actual operational workflows. Information with little value for immediate decisions, such as model names or detection targets, has been removed. The columns now center on operation-focused information: alert severity, recent alert summaries, scenario summaries, and webhook configuration status. Operators can see at a glance what is happening right now and what action is required, which supports faster and more confident responses.

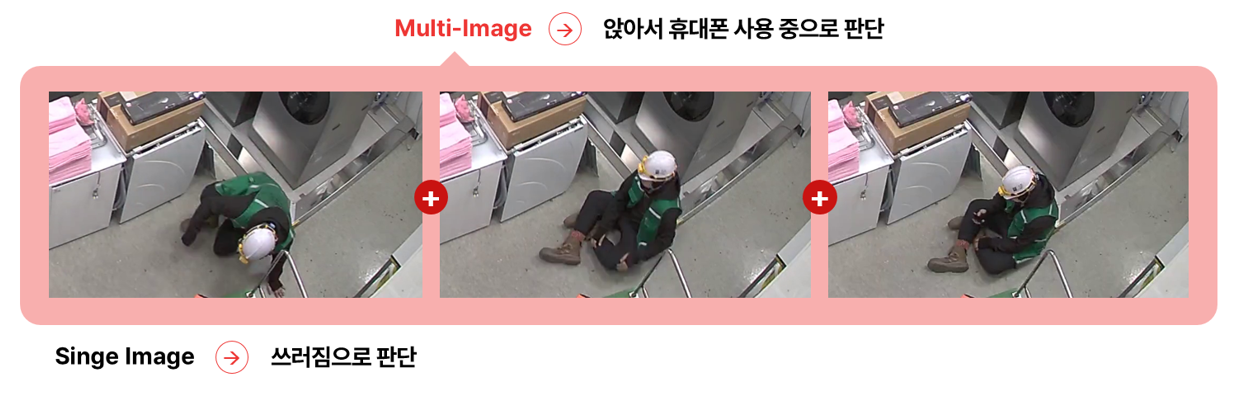

Multi-Image-Based Detection: Understanding Situations as a Flow, Not a Single Image

Most real-world situations do not occur in a static moment, but within a sequence of continuous changes. Traditional single-image-based detection evaluates only a single frame, making it difficult to accurately assess scenarios where context before and after an action is critical.

For example, a person lying on the floor in a gym could be stretching, resting, or experiencing a real medical emergency.

This distinction is hard to make from one frame alone; it depends on what happened before and after. To address this limitation, v2.6 introduces multi-image-based detection. By analyzing multiple consecutive images together, EVA can assess motion flow and state changes as a whole. This significantly improves detection accuracy for scenarios such as intrusion, assault, or collapse, where the process of the action is essential.
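The general idea of judging consecutive frames together can be sketched with a sliding window. This is a minimal illustration under stated assumptions, not EVA's design: the window size, the stride, and the per-frame posture labels are invented for the example, and `abrupt_fall` is a toy stand-in for the model's judgment.

```python
from collections import deque


def sliding_windows(frames, size=4, stride=1):
    """Yield tuples of `size` consecutive frames, advancing by `stride` frames."""
    buffer = deque(maxlen=size)  # automatically drops the oldest frame
    for index, frame in enumerate(frames):
        buffer.append(frame)
        window_start = index - (size - 1)
        if len(buffer) == size and window_start % stride == 0:
            yield tuple(buffer)


def abrupt_fall(window):
    """Toy stand-in for the model: an upright frame directly followed by a lying one."""
    return any(a == "upright" and b == "lying" for a, b in zip(window, window[1:]))


# Hypothetical per-frame posture labels for two gym clips.
resting = ["lying", "lying", "lying", "lying", "lying"]
collapse = ["upright", "upright", "lying", "lying", "lying"]

print(any(abrupt_fall(w) for w in sliding_windows(resting, size=3)))
print(any(abrupt_fall(w) for w in sliding_windows(collapse, size=3)))
```

The last frame of both clips is identical, so a single-image check could not tell them apart; only the window that contains the transition separates the two cases.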

EVA is no longer reacting to isolated moments, but evolving toward an approach that understands how situations unfold over time.

💡 For a detailed explanation of the technical design and decision logic behind multi-image-based detection, please refer to our Tech Blog.

→ Tech Blog | Multi-Frame-based VLM Detection: Beyond Single-Image Limitations Toward Temporal Context

VM Only Detection: When Faster Alerts Matter More Than Complex Interpretation

Not all detections require complex scenario interpretation. In some environments, "the presence of a specific object" alone is immediately meaningful.

Previously, EVA followed a pipeline of Vision Model (VM) → Vision Language Model (VLM), performing scenario interpretation after object detection. While effective for complex judgments, this approach could introduce unnecessary latency for simpler scenarios.

To address this, v2.6 introduces a new VM Only detection process. Alerts are triggered immediately upon object detection, bypassing the VLM stage and thereby improving both detection speed and resource efficiency.

For example, in scenarios such as "Notify me when an ambulance appears," faster responses are possible through object detection alone, without complex interpretation. Operators can now choose detection methods based on scenario characteristics—distinguishing between cases where accurate interpretation is critical and those where rapid response is the priority.
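The difference between the two processes can be sketched as a branch after object detection. The names below (`DetectionMode`, `run_detection`, and the two callables passed in) are hypothetical and only illustrate the control flow described above; they are not EVA's API.

```python
from enum import Enum


class DetectionMode(Enum):
    VM_ONLY = "vm_only"          # alert as soon as the object is detected
    VM_THEN_VLM = "vm_then_vlm"  # detect the object, then interpret the scene


def run_detection(frame, target, mode, detect_objects, interpret):
    """Return True if an alert should be raised for this frame.

    detect_objects(frame) -> collection of object labels (stands in for the VM)
    interpret(frame, target) -> bool (stands in for the VLM stage)
    """
    if target not in detect_objects(frame):
        return False  # nothing detected, so neither mode raises an alert
    if mode is DetectionMode.VM_ONLY:
        return True  # the VLM stage is skipped entirely
    return interpret(frame, target)


# Stub models for demonstration only.
vlm_calls = []


def fake_vm(frame):
    return frame["objects"]


def fake_vlm(frame, target):
    vlm_calls.append(target)
    return frame.get("scene_matches", False)


frame = {"objects": ["ambulance", "car"], "scene_matches": False}
print(run_detection(frame, "ambulance", DetectionMode.VM_ONLY, fake_vm, fake_vlm))
print(run_detection(frame, "ambulance", DetectionMode.VM_THEN_VLM, fake_vm, fake_vlm))
```

In the VM-only call the interpretation function is never invoked, which is where the latency and resource savings would come from; the second call pays for interpretation and can still suppress the alert.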

EVA is not simply a system that generates alerts.

It aims to be an AI operations platform where operator judgment and experience are continuously accumulated.

v2.6 is the release that most clearly represents this direction.

Beyond improving detection accuracy, it enhances operational judgment flows, prioritization, records, and metrics together.

EVA will continue to evolve to better understand complex real-world environments and support operators in making faster, clearer decisions.

Your usage experiences and feedback are the most important foundation for taking EVA to the next level.

🚀 Coming Soon: A Preview of v2.7

- Scenario-based automatic detection configuration

- Polygon-based detection area settings

- Change history alerts for detection scenarios and targets